At 91, John Chowning D.M.A. ’66 still goes home and composes music after a long day. “I’m not a composer,” he told The Daily. “I’m a musician who loves to compose using computers.”

On Feb. 1, the Stanford professor emeritus of fine arts received the 2026 Technical Grammy Award for a discovery he made nearly six decades ago: frequency modulation synthesis, a technique that produces complex, bright, metallic synth sounds.

Chowning called the Grammy “an award I never sought.”

“Most Grammys are given to people who would love to have a Grammy,” he said. “Performers.” The televised spectacle of pop stars feels far removed from the punch cards and late-night programming sessions that shaped his career. But behind the scenes, Chowning’s work quietly redefined the sound of modern music.

Chowning completed his Doctor of Musical Arts degree at Stanford in 1966, but before Palo Alto, he spent three formative years in Paris studying with composition teacher Nadia Boulanger. It was in Paris, which Chowning said was then considered the epicenter of avant-garde music, that the professor emeritus first encountered the work of German composer Karlheinz Stockhausen.

In a Parisian concert hall, Chowning heard Stockhausen’s music projected through loudspeakers surrounding the audience, an early example of spatialized sound. Although multi-speaker home studios are common today, the practice was unusual at the time. The experience lingered and inspired Chowning’s own desire to “physically move sound through space.”

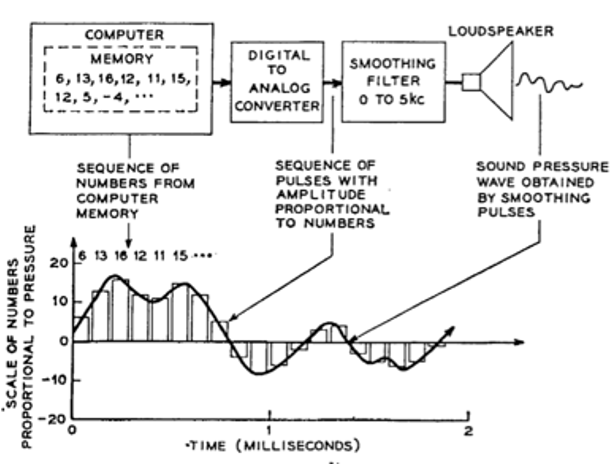

When he arrived at Stanford, however, he was disappointed to find “no interest in electronic music of any sort.” A turning point came when a colleague handed him a Science article by Max Mathews — Chowning’s future peer — titled “The Digital Computer as a Musical Instrument.” Prior to this, Chowning had no formal scientific training. “Music was my life,” he said. But one diagram from the article seized his attention: a computer, a digital-to-analog converter and a loudspeaker.

If he could learn to program, Chowning realized, he could bypass the technical apparatus required in European electronic studios and go “straight from imagination to sound,” he said.

Chowning enrolled in a programming course at Stanford and began working at Stanford’s Artificial Intelligence Lab during his years as a doctoral student. Back then, programs were stored on unnumbered punch cards. “Don’t drop the cards,” Mathews once warned.

Chowning described himself at the time as a musician asking “naive questions” among scientists. The researchers, intrigued by his work generating sound from code, helped him understand the mathematics behind acoustics and motion, even sitting him down to explain formulas when needed.

In 1967, while experimenting with spatialization and timbre, Chowning discovered frequency modulation (FM) synthesis, a method of generating complex tones from simple waveforms.

Fifty-nine years later, it earned him a Grammy.
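For readers curious about how little code the idea takes, here is a minimal sketch of two-oscillator FM synthesis. It assumes the standard textbook form, in which one sine wave (the modulator) varies the phase of another (the carrier). The function name `fm_tone`, its parameters and the frequencies chosen are illustrative assumptions, not Chowning’s or Yamaha’s actual code.

```python
import math

def fm_tone(carrier_hz, modulator_hz, index, duration_s=1.0, sample_rate=44100):
    """Return samples in [-1, 1] for a simple two-oscillator FM tone.

    Hypothetical helper for illustration: sin(2*pi*fc*t + index*sin(2*pi*fm*t)).
    """
    n_samples = round(duration_s * sample_rate)
    samples = []
    for n in range(n_samples):
        t = n / sample_rate
        # The modulator sine wave shifts the phase of the carrier sine wave.
        modulation = index * math.sin(2 * math.pi * modulator_hz * t)
        samples.append(math.sin(2 * math.pi * carrier_hz * t + modulation))
    return samples

# Illustrative values only: index 0 leaves a plain sine wave,
# while a larger index adds sidebands around the carrier frequency.
plain = fm_tone(200.0, 280.0, index=0.0, duration_s=0.1)
bright = fm_tone(200.0, 280.0, index=5.0, duration_s=0.1)
```

With the index set to zero the result is an ordinary sine tone. Raising the index spreads energy into additional frequencies, which is generally what gives FM sounds their brighter character. The samples could be written to an audio file with a library of the reader’s choice.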

When Yamaha released the DX7 synthesizer in 1983, built on FM technology, Chowning became its public face. But he is quick to redirect credit.

In 1972, around 100 Yamaha engineers began working toward a real-time digital synthesizer, years before the technology made it feasible. “I’m proud to be associated with it,” Chowning said, “because it democratized computer music.”

Before the DX7, digital synthesis required multimillion-dollar institutional computers at places like Stanford, Bell Labs or MIT, according to Chowning. But after 1983, a composer could purchase a DX7 and a small personal computer for roughly $2,000 and build a powerful digital workstation at home. Computer music expanded rapidly, and the instrument became a staple of 1980s popular music.

Meanwhile, Chowning was building a program for computer music at Stanford. In 1964, he began what was then called the Computer Music Project. Today, it is formally known as the Center for Computer Research in Music and Acoustics (CCRMA).

CCRMA’s influence soon extended internationally. In 1972, composer György Ligeti visited Stanford and heard Chowning’s spatial experiments. “No one knows about this in Europe,” Chowning recalled Ligeti telling him. Ligeti then urged his colleagues abroad to “pay attention to what is going on at Stanford in computer music.”

That message reached French composer and conductor Pierre Boulez, who invited Chowning to join the planning committee for IRCAM, a major music research institute in Paris that opened in 1977. Stanford’s work helped shape IRCAM’s technological foundation.

When Chowning retired in 1996, his former student Chris Chafe ’83 succeeded him as CCRMA director and now chairs Stanford’s music department. Chowning still attends student presentations and remains closely connected to the center. In a press release, CCRMA called Chowning’s Grammy “a testament to a lifetime of innovation that has empowered generations of musicians, producers and sound designers worldwide.”

Zachary Guo ’28, a composer himself, was still in high school when he first learned about CCRMA and Chowning’s “revolutionary contributions” to music.

“Digging further and learning more about CCRMA was one of the largest factors [that convinced] me to apply to Stanford,” he told The Daily. Last summer, Guo took a production class at CCRMA: “The opportunity to interact with so much technology as someone with little computer music background was like entering a whole new universe,” he said.

Retirement has not slowed Chowning. In 2019, he helped assemble an international research team, including collaborators from Norway and France, to study the acoustics of Chauvet Cave in southern France, home to 36,000-year-old wall paintings. “What did these Aurignacian people hear when they made these paintings?” he asked. “Ritual is always accompanied by sound, except for prayer.”

No instruments survived from the period. Working within French conservation laws, which prohibit disturbing the cave floor, the team used photogrammetry and computer modeling to digitally subtract thousands of years of mineral buildup and reconstruct the cave’s original acoustics. “We can hear what [the Aurignacian people] heard,” Chowning said.

Despite living in Silicon Valley’s backyard, Chowning remains skeptical of generative AI in music. “I’m as interested in using AI for composing as Katie Ledecky is interested in a robot swimming in half her record time,” he said. “She loves to swim, and I love to compose.”

In his current work, Chowning will use AI tools for efficiency, but doubts their role in creative authorship. “Music creation is dependent on individual imaginations and people who connect with other people.”

The ones who succeed, he believes, are those who persist. “I keep going and I learn.” For Chowning, a computer language is to a composer what natural language is to a poet: “an incredibly rich resource,” built from generations of thought. At 91, he is still exploring it.