On Stanford’s campus, “ChatGPT” has become a verb.

As a linguistics major, I love everything related to language; I could talk for days about words and how we use them. Linguistics, unlike English or language arts courses, is historically very open to innovation and “bad” grammar. In the field’s academic tradition, anything can be a word. The only requirement is that it gets used enough and that people generally accept it as having a consistent meaning in communication.

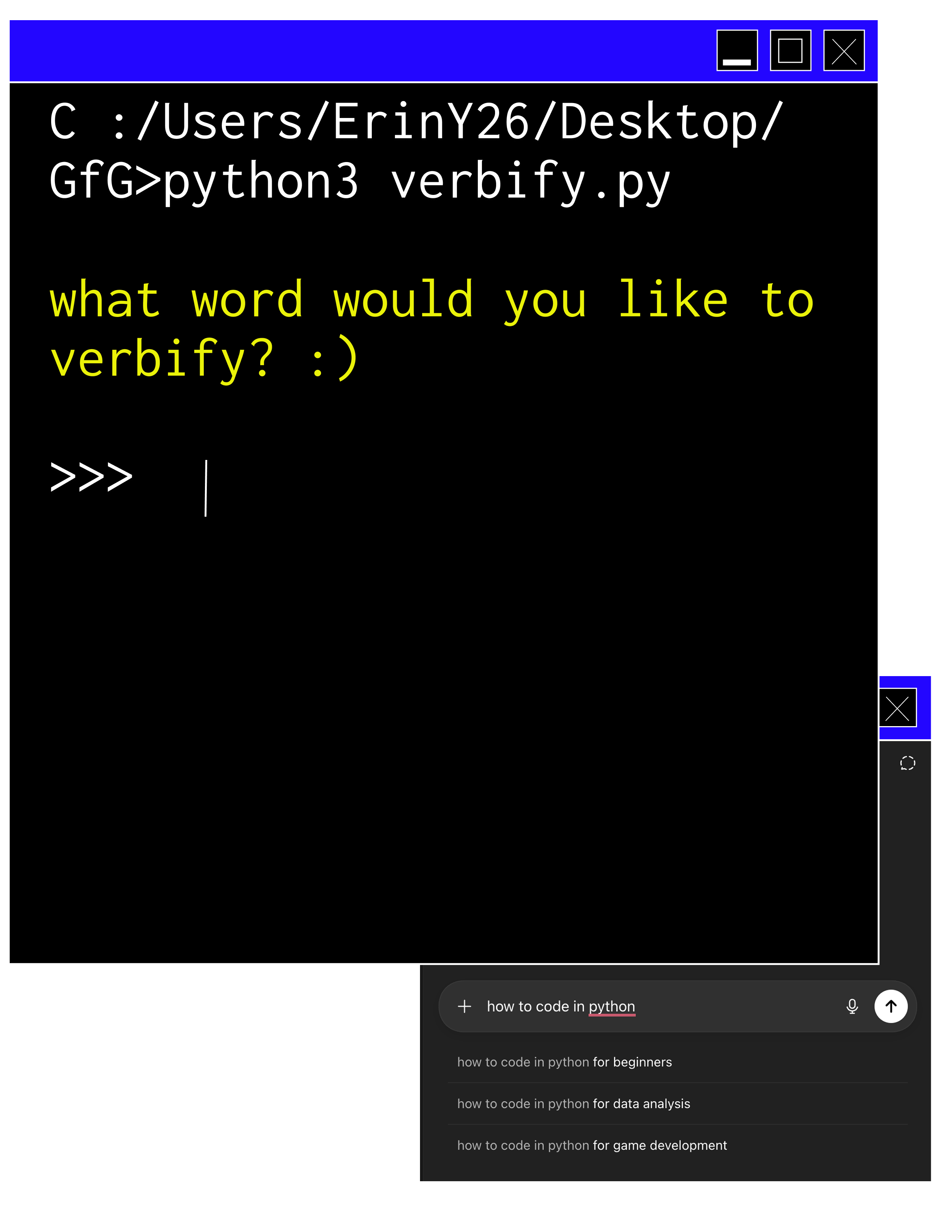

A few examples from my text message history make it clear that “ChatGPT” has crossed this threshold of acceptability:

“i’ll try to gpt it,” I wrote to an artistically challenged group chat about sketching a design for our house’s Big Game banner.

“MAYBE CHAT GPT IT,” my friend recommended after I sent a picture of my mystery allergic reaction.

“another hard day of chat gpting,” my friend joked about doing menial work during her summer internship.

“let’s go through and do some de-chat-gpt-ing of the paragraphs,” one of my group project partners recommended as we were doing final touch-ups on our report.

I’m a big fan of proprietary eponyms: words that started as brand names but eventually became generic terms. Jello, band-aid, and granola are a few of my favorites, and it seems like ChatGPT might be poised to make the list.

Ask any of my friends from whom those text messages were sourced, and I can promise none of them would be able to tell you that GPT stands for generative pre-trained transformer. But all the same, I doubt many of us would be able to say we knew that “radar” stands for (ra)dio (d)etection (a)nd (r)anging. Language evolves to keep up with the times, and human use dictates what words end up meaning.

What can we make of this phenomenon? Is it a sign that OpenAI is winning the arms race? You never hear people say, “I’m gonna Claude my homework” or “Let me de-Gemini this.” It’s reminiscent of how “Google” became a verb in people’s vocabulary at the same rate that it became essential to life in the 21st century. But then again, maybe not. When I was interning at a bank this past summer, we got cleared to use Microsoft Copilot in our work (which, I admit, is also powered by OpenAI, but that’s beside the point), and I saw the same verbification take place. My older coworkers would use the feature to generate meeting summaries and write Excel functions, and then would turn to the person next to them and brag, “Let me show you what I just Copilot-ed.”

Compared to other proprietary eponyms, there’s a lot more to “ChatGPT” as a word than its use as a verb. I’ve seen it personified into a secretary of sorts. The text message below was the first time I saw ChatGPT get gendered:

“I just asked chat gpt and basically she said to just do…” said a classmate in response to my question about a homework assignment.

Even more common are agentic uses of “ChatGPT” as the subject of a sentence. People talk about GPT like it’s a person who has feelings, strengths, and shortcomings, which feels markedly different from how we talk about Google (or Jello or band-aids, for that matter). Others use its “first name,” saying “Chat” for short. I notice this comes up more when ChatGPT is a character in the sentence, rather than the verb (maybe because “chat” itself is already a common English verb). It would not surprise me if, soon, the Webster’s Dictionary entry for the noun “chat” gained an additional definition:

- idle small talk: chatter

- light informal or familiar talk; especially: conversation

- [imitative] any of several songbirds (as of the genera Emarginata or Myrmecocichla)

- online discussion in a chat room

- shorthand for ChatGPT, a generative AI conversational model

Unlike Google, ChatGPT can “mess up,” or “not understand” what we mean when we try to ask for help. But it can also “come up with” new ideas that we wouldn’t have thought of ourselves. This defines the difficulty in how we talk about AI. Who are we giving credit to? Did I ChatGPT the idea, or did ChatGPT hand it to me on a silver platter?

Others have written about the verbification of ChatGPT, but only from the perspective of branding. What I see in how people talk about ChatGPT is a measure of sentiment. The way people use it as a verb says something about how Stanford students make use of AI in our everyday lives. I have friends who embrace it wholeheartedly; they listen to AI-generated music, vibe code personal projects, and use voice-to-text to talk to ChatGPT when they have questions. I also have friends who resist AI completely for various reasons: ethical concerns, environmental concerns, or simply because they don’t feel like they need it. Interestingly, I haven’t noticed much of a split across major or gender. I have friends in the humanities who swear by ChatGPT or Claude to revise their papers; I have friends in engineering who won’t let AI anywhere near their problem sets.

“I actually HATE chat gpt and never use it for anything and I am about to use it for this class,” texted a frustrated friend from high school who was complaining to our group chat about an incompetent professor.

I recently did a user interview with an AI startup in exchange for an Amazon gift card, and I was asked if I thought most college students were afraid of AI taking our jobs. I said that for most Stanford students, the answer would be no. Those who don’t use AI have enough self-assuredness to feel they can make it because the quality of their work is better than what automation produces. Those who use AI feel a sense of control over the situation. They are the lucky “AI-natives” that LinkedIn posts love to talk about, people who are trained to use these tools to make themselves more efficient and indispensable, rather than get left behind.

One thing I love about language is its flexibility. Humans have been iterating and inventing new words and grammatical structures since the beginning of time. Even in the same language, a sentence that makes perfect sense to a group in Philadelphia might sound like complete nonsense to English speakers in Belfast, and vice versa. Large language models are trained on human data, and they can certainly get very good at mimicking how we talk. I’ve seen the way ChatGPT has influenced language use — I find myself wanting to use em-dashes far more than I ever remember wanting to in the past. That being said, I believe in the first-mover’s advantage, in that you can’t come up with a complete innovation purely based on prior knowledge. New uses of language will always come from people on the ground, who are living with and negotiating new world knowledge in how we communicate. I like to think that the way we use language mirrors the way the world works at large.

Notably, a human can ChatGPT something, but ChatGPT cannot “human” the same thing.