One of the biggest limitations of today’s AI systems isn’t intelligence — it’s memory.

Despite rapid advances in large language models, most AI agents still “forget” prior interactions once a session ends, forcing developers to rely on short-term context windows or token-heavy workarounds that don’t truly scale.

EverMind, an AI infrastructure company, is taking aim at this problem with EverMemOS Cloud, a production-grade memory system designed to give AI agents durable, coherent, and continuously evolving long-term memory. Unlike standard retrieval-augmented generation (RAG) or extended context windows, EverMemOS is modeled on the way biological memory forms.

Why it matters:

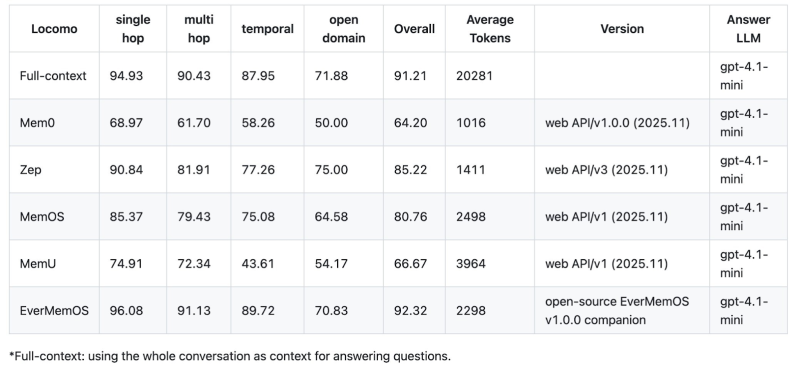

- Accuracy: The system achieves 93.05% accuracy on the LoCoMo (Long-term Conversational Memory) benchmark, placing it among the top-performing existing systems on multi-hop and temporal reasoning.

- Efficiency: It substantially reduces token usage while improving reasoning, delivering a 19.7% gain in reasoning performance compared to standard models.

- Other Benchmarks: The system also achieved leading performance on three additional benchmarks: LongMemEval (83% accuracy), HaluMem (90.04% recall), and PersonaMem v2.

The Memory Genesis Competition 2026

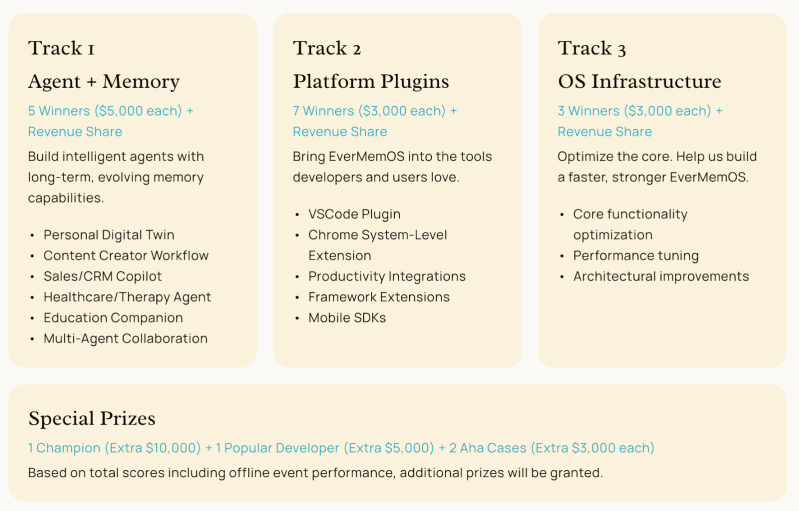

The Memory Genesis Competition invites academic researchers, student builders and industry engineers to build with EverMemOS. Participants can compete in one of three tracks: (1) Agent + Memory, (2) Platform Plugins, and (3) Operating System Infrastructure. With an $80k+ prize pool backed by OpenAI and Amazon Web Services, this is a competition Stanford affiliates should not miss.

Finalists will pitch their projects to a panel of judges at the Computer History Museum in Mountain View, CA. The competition will conclude with an awards ceremony there on April 4, 2026.

Don’t pass up the opportunity to work with new, state-of-the-art AI infrastructure! Enroll today.