At the core of all human interaction is communication. But over the past four years, a fundamental shift has snuck up on us. After the introduction of ChatGPT and other large language models (LLMs), the way that we write emails, the vernacular we use, even the means by which we gather information have been altered. Suddenly, it’s become difficult to distinguish between a “human” voice and an “AI-generated” one. Purposely forgetting a comma or incorporating an auspicious word or substituting an em dash is how we prove that we are human, that we took time to draft our work, our correspondence.

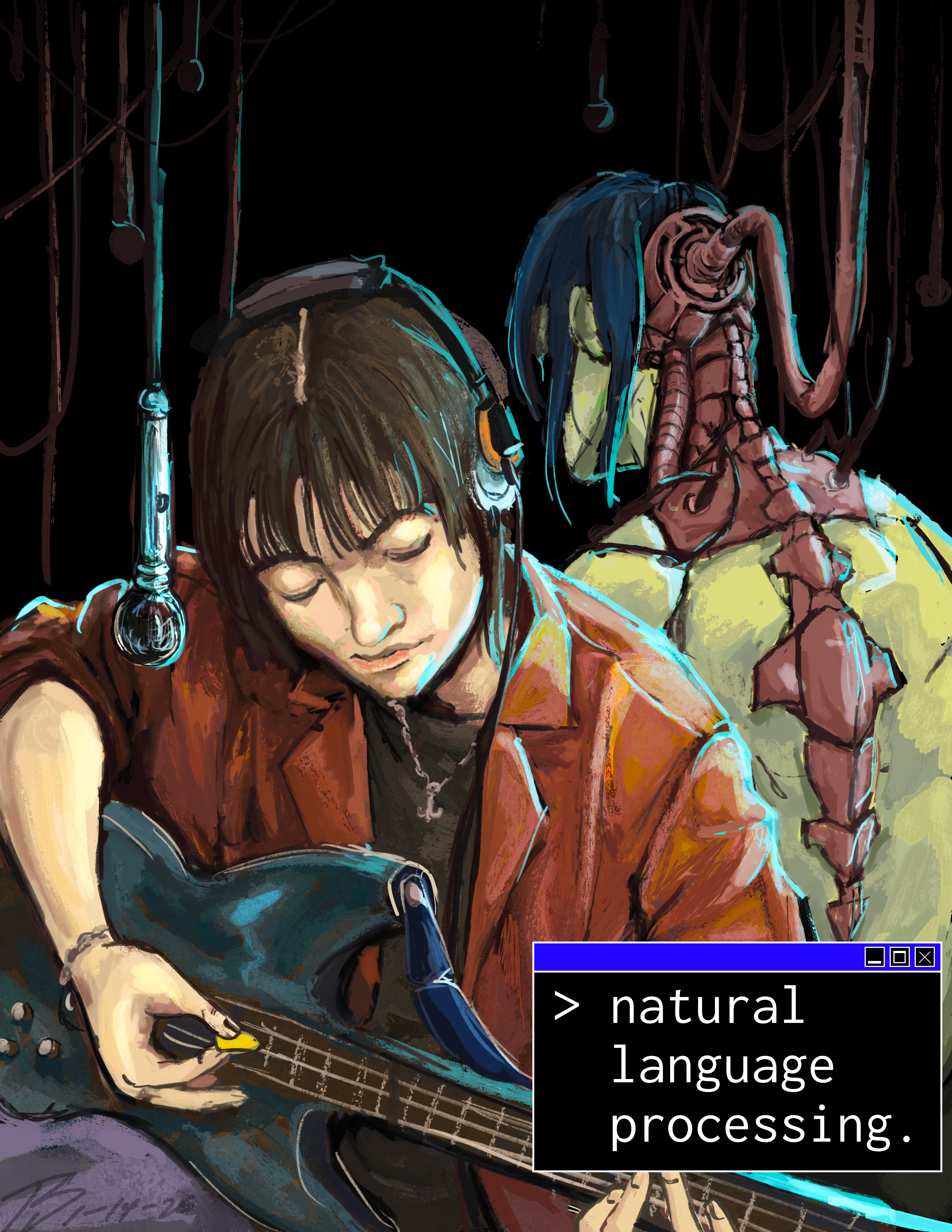

We came into this issue knowing that we wanted to address the topic of AI head-on, specifically the impact of LLMs. When Stanford students think about natural language processing, terms like neural network, pre-training data or tokenization probably come to mind. But as we began ruminating on the term itself, we suddenly realized that it has a dual meaning.

Our issue, titled “Natural Language Processing,” is about AI, but it is also about how we, as humans, process our own natural language: how the words we use alter the ways we see each other and the world around us. This issue has evolved far beyond the simple concept we ideated back in July. It is the product of a collective imagination. After all, interviewing and writing and editing and redrafting and discussing and celebrating and folding over with laughter and welling with tears — these are all forms of natural language processing.

And through this processing, we exercise our ability to connect with one another, which is what ultimately separates us from our AI counterparts. Our capacity to read through a piece and sit deep in the rut of someone else’s perspective, to feel the emotions that prompted their beliefs, to relish the beauty of their mind’s prose, remains a defining and stunning human characteristic.

Charlotte Cao ’27 & Callia Peterson ’26

Vol. 268 Magazine Editors