“We’re not necessarily going to find the solution today,” Fei-Fei Li, co-director of the Stanford Human-Centered AI (HAI) Institute, told a Memorial Auditorium filled to its 1,705-seat capacity. “But can we involve the humanists, the philosophers, the historians, the political scientists, the economists, the essayists, the legal scholars, the neuroscientists, the psychologists and many other disciplines into the study and development of AI in the next chapter?”

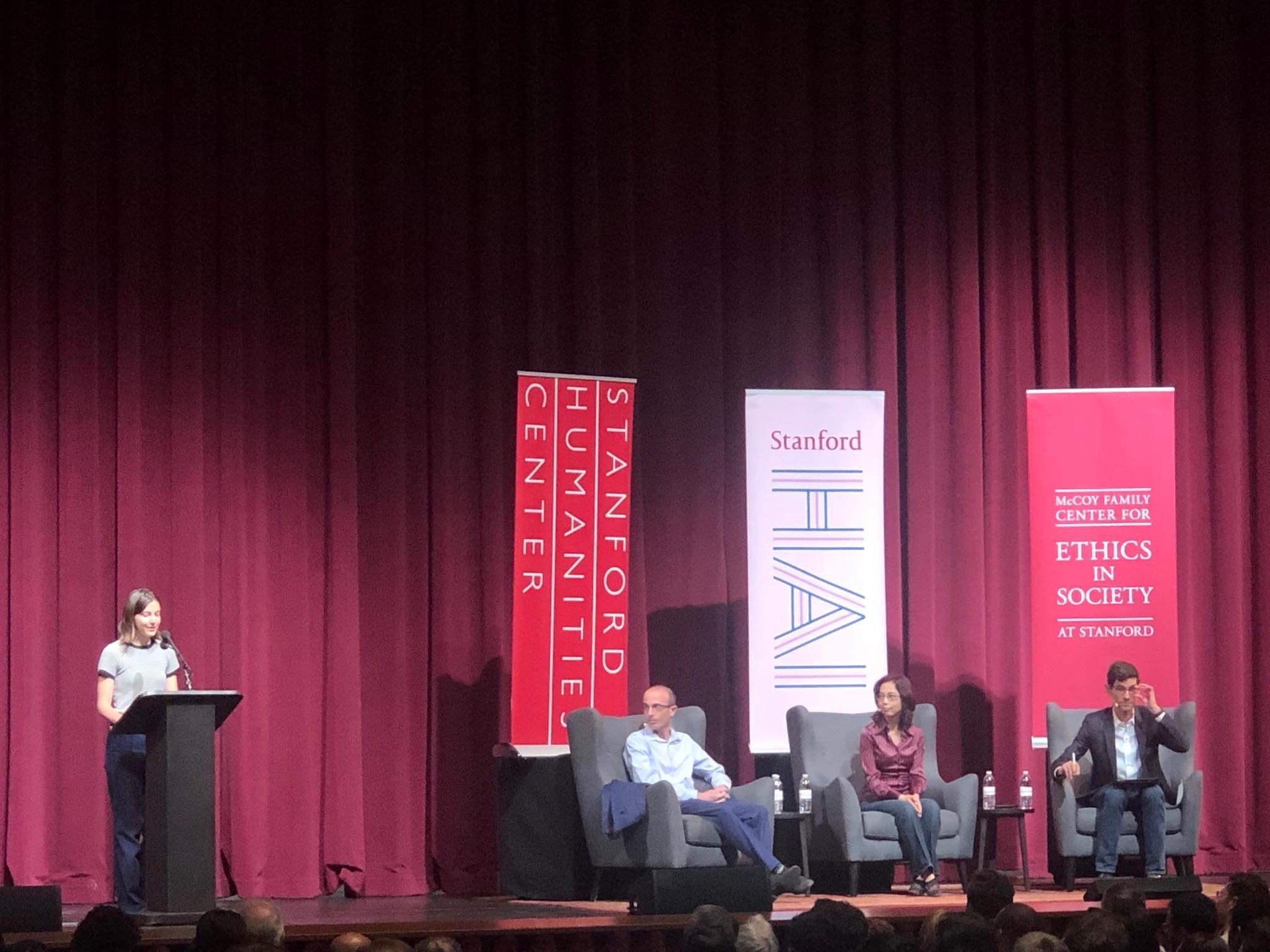

Collaboration between computer scientists and the rest of the academic community was a central theme of Monday evening’s conversation between Li, a renowned artificial intelligence (AI) researcher and computer science professor, and celebrated historian and author Yuval Noah Harari. The talk, moderated by WIRED editor in chief Nicholas Thompson ’97, explored the rapid development and deployment of AI technology and the impact it may have on human agency and democracy in the 21st century.

“There is a lot of hype now around AI and computers,” Harari said. “But that is just half the story. The other half is the biological knowledge coming from brain science and biology, and once you link that to AI, what you get is the ability to hack humans.”

Harari, a history professor at the Hebrew University of Jerusalem and two-time winner of the Polonsky Prize for Creativity and Originality, drew on his experience writing about some of the most pressing issues of the modern world, from artificial intelligence ethics to climate change policy. He is the author of the international bestsellers “Sapiens: A Brief History of Humankind,” “Homo Deus: A Brief History of Tomorrow” and, most recently, “21 Lessons for the 21st Century.”

“Any technology when it was created starting from fire is a double-edged sword,” said Li. “It can bring improvements to life, to work, to society, but it can bring perils.”

“More and more of your personal decisions in life are being outsourced to an algorithm that is so much better than you,” Harari said. “We have a dystopia of surveillance capitalism … decisions like where to work, what to study, who to date, who to vote for.”

Harari discussed the risks of AI and the sheer complexity of the issues it presents. He sees science as “getting worse and worse at explaining its findings with the general public, which is the reason for things like [people] doubting climate change.”

“And it’s not even the fault of the scientists,” he added, “because the science is just getting more and more complicated.”

After Harari considered the philosophical and ethical implications of rapid developments in AI, Li concluded the talk with a discussion of multidisciplinary collaboration as a means of continuing measured progress in the field.

“[We need to] welcome the kind of multidisciplinary study of AI cross-pollinating with economics, with ethics, with law, with philosophy … there’s so much more we need to understand about AI’s social, human, anthropological, ethical impacts, and we cannot possibly do this alone,” she said.

Contact Shan Reddy at rsreddy ‘at’ stanford.edu.